Cloud services like AWS are incredible for scaling, but they come at a cost, especially when you’re working with Large Language Models (LLMs). When developing an AI-powered system, these costs tend to sit quietly in the back of your mind while designing, building, and deploying.

I was recently working on an AI-powered Email Phishing Rater. The goal was to create a system that analyses incoming emails and flags potential phishing attempts using a custom, lightweight, fine-tuned model, and plug it into any popular email provider as an add-on. For our first MVP, we shipped an add-on for Gmail.

The model is hosted on an inference endpoint on AWS SageMaker. It’s a common production choice for custom models (though not the only one; there’s also AWS Bedrock, self-hosted GPUs, or third-party providers). But regardless of where you host it, inference isn't free. Billing is tied to uptime or requests, and AWS doesn't care whether your product is in its alpha, beta or omega stage—it still bills.

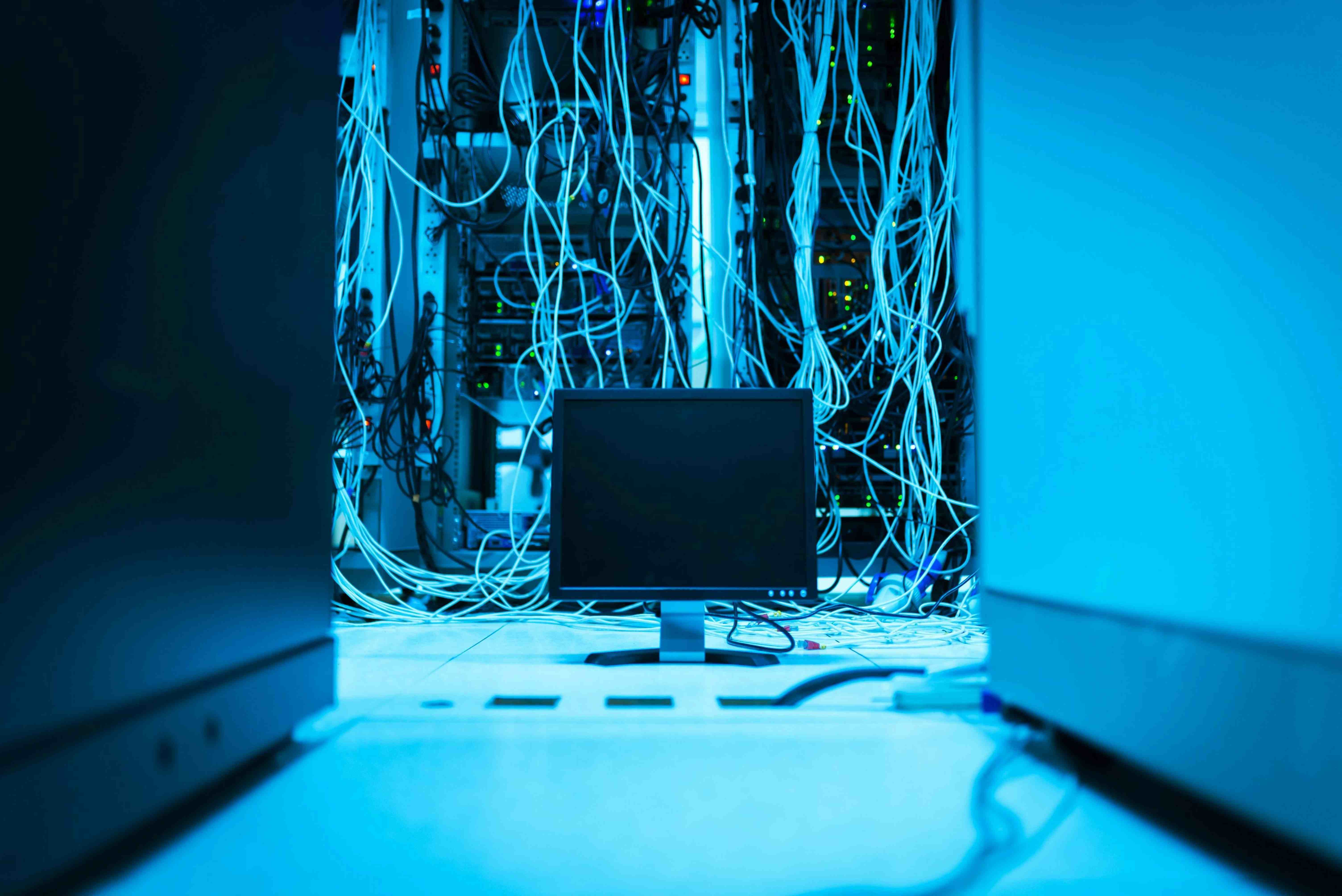

Basic AI-Powered System

When building a cloud-hosted AI-powered system, there are typically three main components:

The UI

Typically, this is a React/Next.js app served as a static site, with a URL the user opens in a browser. It could also be a CLI or an SDK. In our case, the UI is an add-on, so technically I’m not providing a URL; Gmail already does that for us.

The Backend Server

This is what sits between your users and your model. Whether you’re calling a frontier model (OpenAI, Anthropic) or your own fine-tuned model, this is where orchestration, auth, and business logic live.

The LLM Inference Engine

And lastly, this is where your model actually runs. That could be a frontier model behind a managed API (like AWS Bedrock) or a custom model behind a cloud-managed endpoint (like AWS SageMaker).

While these are the building blocks of a typical AI-powered system, there can be variations depending on how complex your product gets.

Since Gmail already hosts the UI, that leaves the other two components. So how about a local cloud that mimics the AWS architecture: a backend server with a remotely accessible API URL (i.e. pluggable into our Gmail add-on on Apps Script) and an LLM Inference Engine, all at $0 cost (well, only local compute cost 🫣)?

Our “Local Cloud Provider”

LLM Inference Engine

To handle LLM inference, we went with a lambda-style callable function from the backend.

Since our backend is written in Rust, instead of using the AWS Lambda SDK we used the fantastic PyO3 library. It lets us call directly into Python from Rust, invoking only the function we need:

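    use pyo3::prelude::*;
    use pyo3::types::PyDict;

    // `Result<String>` assumes a crate-level alias (e.g. `anyhow::Result`);
    // the original listing lost its generic parameters.
    async fn invoke(&self, prompt: &str) -> Result<String> {
        // The GIL is held only while the closure runs.
        let p: PyResult<LocalInferenceResult> = Python::with_gil(|py| {
            // Import the inference script and call the configured function.
            let inference = PyModule::import_bound(py, self.script_name.as_str())?;
            let result = inference
                .getattr(self.function.as_str())?
                .call1((prompt,))?;

            // The Python side is expected to return {"label": str, "score": float}.
            let result_dict = result.downcast::<PyDict>()?;
            // `as_any()` gives mapping-style lookup: a missing key raises KeyError.
            let label: String = result_dict.as_any().get_item("label")?.extract()?;
            let score: f64 = result_dict.as_any().get_item("score")?.extract()?;
            Ok(LocalInferenceResult { label, score })
        });

        // Convert the typed result into the string the API returns.
        let res: String = p?.try_into()?;
        Ok(res)
    }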

This bridges directly into a Python script that handles inference, which roughly looks like this:

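    import torch
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    # Loaded once at import time.
    model = AutoModelForSequenceClassification.from_pretrained("merged-model")
    tokenizer = AutoTokenizer.from_pretrained("merged-model")

    # `prompt` is the string passed in from the Rust caller.
    inputs = tokenizer(prompt, return_tensors="pt", truncation=True, padding=True)
    with torch.no_grad():  # inference only, so skip gradient tracking
        outputs = model(**inputs)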

We initialise the model once (this is important) and reuse it across calls.

And now we have our API response to feed to our UI.

The Orchestrator

Nothing feels better than deploying with a single command.

In production, this is usually handled by something like GitHub Actions. A typical workflow builds a Docker image for each repository, keeping its artefacts up to date with the latest commit.

Ideally, each of these Docker images would be hosted on a service like AWS ECR, and because everything lives within the same cloud, wiring the containers together isn’t much of a hassle.

For this project, we have multiple servers:

- A REST API

- An RPC server (only reachable via the REST layer)

We replicate this setup locally using Docker Compose.

Docker Compose gives our containers access to shared environment variables and secrets (as GitHub or AWS would offer), service discovery between containers, as well as port mapping and resource constraints.
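Service discovery in Compose comes down to containers reaching each other by service name on the shared network, with addresses usually passed in as environment variables. Here is a minimal sketch of how the REST layer might build the RPC server's address. `RPC_HOST`, `RPC_PORT`, the `rpc_server` service name, and port 50051 are all assumptions for illustration rather than the project's actual configuration, and the real backend is Rust, not Python.

```python
import os
from collections.abc import Mapping


def rpc_address(env: Mapping[str, str] = os.environ) -> str:
    """Build the RPC server address from environment variables.

    Inside the Compose network the service name doubles as a hostname,
    so the default host is the (hypothetical) service name `rpc_server`.
    """
    host = env.get("RPC_HOST", "rpc_server")
    port = int(env.get("RPC_PORT", "50051"))  # raises ValueError on a non-numeric port
    if not 0 < port < 65536:
        raise ValueError(f"RPC_PORT out of range: {port}")
    return f"{host}:{port}"
```

Outside Compose, setting `RPC_HOST=localhost` would point the same code at a server running directly on the host.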

To top it all off, everything shuts down with just one command!

The "CloudFront" Layer

There’s an elephant in the room.

How does Gmail (outside my local ecosystem) access my services? It’s definitely not going to resolve api_server:8080; it needs a publicly accessible URL.

And here's where ngrok comes in. With a single command, we now have our test "CloudFront" URL for our server:

ngrok http 8080

You get a public HTTPS endpoint that tunnels directly to your local server. For development and testing, it behaves like a lightweight stand-in for something like CloudFront or an API Gateway.
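Because the add-on needs the current tunnel URL, it can help to read it programmatically instead of copying it from the terminal. The ngrok agent serves a local API, by default on port 4040, where `/api/tunnels` lists the active tunnels. The sketch below assumes that default address; `pick_https_url` and `current_public_url` are hypothetical helper names.

```python
import json
from typing import Optional
from urllib.request import urlopen

NGROK_API = "http://127.0.0.1:4040/api/tunnels"  # default agent address; adjust if changed


def pick_https_url(payload: dict) -> Optional[str]:
    """Return the first HTTPS public URL in an /api/tunnels response, if any."""
    for tunnel in payload.get("tunnels", []):
        url = tunnel.get("public_url", "")
        if url.startswith("https://"):
            return url
    return None


def current_public_url(api: str = NGROK_API) -> Optional[str]:
    """Ask the running ngrok agent for its tunnels and pick the HTTPS one."""
    with urlopen(api, timeout=5) as response:
        return pick_https_url(json.load(response))
```

With `ngrok http 8080` running, `current_public_url()` should return the address to plug into the add-on; it returns `None` if no HTTPS tunnel is open.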

And there you have it, a “local cloud” setup that mirrors production architecture closely enough to be useful, while avoiding per-request costs during development.

Not truly free, but controlled. And sometimes, that’s exactly what you need.